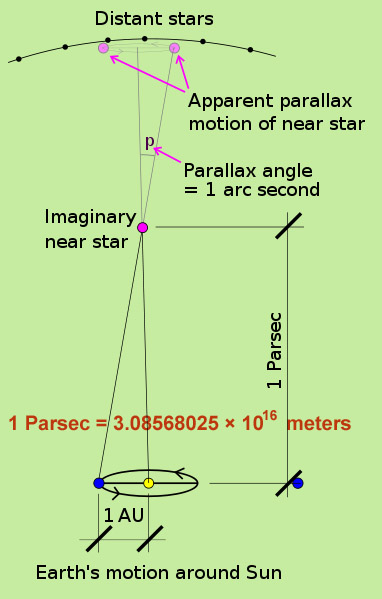

The parsec is defined as the distance at which 1 AU, perpendicular to the observer’s line of sight, subtends an angle of 1 arcsecond.
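The definition above is a right triangle: a 1 AU side opposite a 1-arcsecond angle. Here is a minimal sketch of the arithmetic. It assumes the IAU value of the astronomical unit (exactly 149,597,870,700 m). It compares the exact tangent form with the small-angle form, 648000/π AU, which is the value conventionally used for the parsec. The variable names are illustrative only.

```python
import math

# Astronomical unit in metres, as fixed by the IAU in 2012 (assumed constant here).
AU_M = 149_597_870_700

# A full circle is 360 * 60 * 60 = 1,296,000 arcsec = 2*pi rad,
# so one arcsecond is pi / 648,000 radians.
ONE_ARCSEC_RAD = math.pi / 648_000

# Distance (in AU) at which a 1 AU baseline subtends 1 arcsec.
pc_au_tan = 1 / math.tan(ONE_ARCSEC_RAD)   # exact right-triangle geometry
pc_au_small = 1 / ONE_ARCSEC_RAD           # small-angle form, tan(x) ~ x, i.e. 648000/pi

# Convert the conventional (small-angle) value to metres.
pc_m = pc_au_small * AU_M

print(f"1 pc = {pc_au_small:.3f} AU (small-angle)")
print(f"1 pc = {pc_au_tan:.3f} AU (exact tangent)")
print(f"1 pc = {pc_m:.4e} m")
```

At an angle this small, the two forms agree to about one part in 10^11. The choice between them has no practical effect.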

The parsec, a unit of length commonly used by astronomers (along with the astronomical unit, AU), is equal to about 3.26 light-years.
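The 3.26 figure can be reproduced with a short calculation. This sketch assumes three conventions: the IAU astronomical unit, the exact SI speed of light, and a light-year based on the Julian year of 365.25 days. It also takes the parsec as 648000/π AU.

```python
import math

AU_M = 149_597_870_700            # astronomical unit in metres (IAU value, assumed)
C_M_PER_S = 299_792_458           # speed of light in m/s (exact in the SI)
JULIAN_YEAR_S = 365.25 * 86_400   # Julian year in seconds (assumed light-year convention)

# Parsec in metres, using the small-angle value of 648000/pi AU.
PARSEC_M = (648_000 / math.pi) * AU_M
# Light-year: distance light travels in one Julian year.
LIGHT_YEAR_M = C_M_PER_S * JULIAN_YEAR_S

ly_per_pc = PARSEC_M / LIGHT_YEAR_M
print(f"1 pc = {ly_per_pc:.4f} ly")
```

Rounded to three decimals, the ratio comes out to 3.262.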


This is why the parsec is now the preferred unit of distance for all serious astronomy, whilst the light-year is relegated to popularizations and SF.

Astronomers (and astronomy journals) are, I suppose, free to prefer any unit they want, but the logic seems flawed to me. Granted, this may not be an exact equality for all the reasons listed above, but I hardly think it’s fair to call it a “guesstimate”. My copy of the CRC Handbook of Chemistry and Physics includes conversion factors in the definition of “parsec” and says that 3.262 ly = 1 pc. After all, you measure parallax with respect to the “fixed stars”, meaning those that are so far away that they don’t seem to change positions over such a piddly distance as 2 AU.

Also, it’s not clear to me that the definition of parsec includes a specification of where in the Earth’s orbit it should be measured (remember that it’s not a perfect circle), which makes it a somewhat inexact quantity… and I’d guess that we know the speed of light to a higher precision than we know the length of any arbitrary axis of Earth’s orbit anyway.

Distances in parsecs can be measured directly for relatively near objects, but distant objects don’t show any measurable parallax shift.

Easier still, of course, a parsec can be measured directly (measure the parallactic shift at various points in Earth’s orbit, find the line of sight thereby, and invert the parallax angle to get the distance), unlike a light-year, which has to be guesstimated.
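The “invert to get the distance” step works out to d (in parsecs) = 1 / p (in arcseconds), where p is the annual parallax angle. This is a minimal sketch, and the function names are hypothetical. The guard against a zero or negative angle reflects the point that very distant objects show no measurable shift, so there is nothing to invert. The second function uses the exact tangent instead of the small-angle approximation, for comparison.

```python
import math

# Arcseconds per radian; also the number of AU in one parsec (648000/pi).
ARCSEC_PER_RAD = 648_000 / math.pi


def distance_pc(parallax_arcsec: float) -> float:
    """Distance in parsecs from a parallax in arcseconds (small-angle: d = 1/p)."""
    if parallax_arcsec <= 0:
        raise ValueError("parallax must be positive and measurable")
    return 1.0 / parallax_arcsec


def distance_pc_exact(parallax_arcsec: float) -> float:
    """Distance in parsecs using d = 1 AU / tan(p), then converting AU to pc."""
    if parallax_arcsec <= 0:
        raise ValueError("parallax must be positive and measurable")
    p_rad = parallax_arcsec / ARCSEC_PER_RAD
    d_au = 1.0 / math.tan(p_rad)
    return d_au / ARCSEC_PER_RAD


# Illustrative (made-up) parallax of 0.25 arcsec.
print(distance_pc(0.25))        # small-angle result
print(distance_pc_exact(0.25))  # tangent-based result, nearly identical
```

For any parallax a real star could show, the two results differ only in the far decimal places. The simple reciprocal is the form usually quoted.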